Students are doing homework after a full day, and may be caring for siblings, working, and helping out at home. Some of them don’t have adequate tech or space to work. Homework is a second or third shift for them and may be increasing educational inequity.

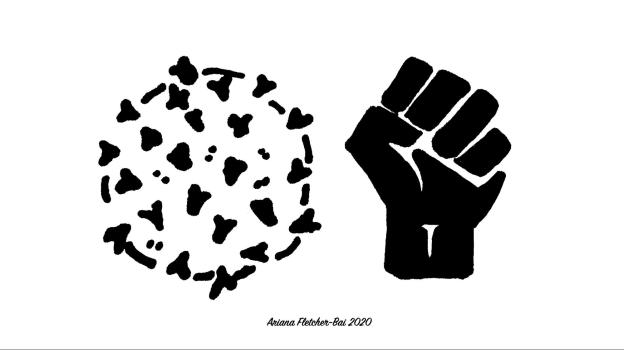

Is ed-tech exacerbating inequity?

I have been thinking a lot about where ed-tech might be exacerbating existing inequity. That led me to a colleague’s tweet of “Homework is a Social Justice Issue”, originally published here in 2015. It talks about the underlying assumptions we make when we give homework, especially in K12: that students have the time, background knowledge, and tools to do school work at home. If students are working or taking care of younger siblings, they don’t have the time. If the work is the kind that often draws in parents of affluent students to help, then students probably don’t have the background knowledge to do it on their own yet. And if the homework is on a laptop/phone and requires internet access, or requires space to organize and maintain materials, they may not have the tools. We must take these environmental realities into account when designing and building educational software that will meet the needs of students from all walks of life.

Long hours don’t work.

I recently realized that the people I meet with after 4pm aren’t getting the same creativity and deep listening as the people who talk to me at 9 or 10am. It made me wonder why we are asking students to do a second or often third shift, when the research on how overwork harms productivity is so clear (here’s a summary of the harms), and there are similar, real harms to work quality (see this study on long medical shifts). Do you want someone in their 18th hour doing brain surgery on you?

When and what to assign?

So, even IF students have the time, background knowledge, and tools, does it really make sense to ask them to work a second shift? Students do need time to grapple with hard problems, and many students need quiet to work. So it isn’t an easy problem to fix. The article suggests that if you are assigning homework in K12, you should ask yourself these questions.

- “Does the task sit low on Bloom’s Taxonomy? In other words, are students likely to be able to do it independently?

- If not, does the task build primarily on work already performed or begun in class? In other words, have students already had sufficient opportunity to dig deep into the task and work through their difficulties in the presence of peers and/or the teacher?

- Does the task require only the technology to which all students have sufficient access outside of school?

- Can the task reasonably be accomplished, alongside homework from other classes, by students whose home life includes part-time work, significant household responsibilities, or a heightened level of anxiety at home?”

https://modernlearners.com/homework-is-a-social-justice-issue/

How could ed-tech help?

Homework systems and courseware could make it easy and safe for students to provide feedback on their assignments, including individual questions and tasks within their assignments. Rather than focusing so much on giving analytics about students, ed-tech could provide instructors with analytics about the assignments, questions, and tasks they give. Which ones seem to require a lot of prerequisite knowledge that students don’t already have? Which ones seem to help students do well in the course? Which questions behave like “weed-out” questions? Maybe ed-tech should find ways to collect demographic information and measure outcomes to report on inequitable results, while protecting student privacy.
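As a rough sketch of what analytics about questions (rather than about students) might look like, the Python below summarizes each question by its overall correct rate and by the gap in correct rate between demographic groups. Everything in it is an assumption made for illustration: the `Response` fields, the self-reported `group` label, and the minimum group size of 5 used to hide small groups. It is not taken from any particular homework system.

```python
from collections import defaultdict
from dataclasses import dataclass

# Assumption: groups with fewer students than this are hidden from the report.
MIN_GROUP_SIZE = 5


@dataclass(frozen=True)
class Response:
    """One student's result on one question (hypothetical schema)."""
    student_id: str
    question_id: str
    correct: bool
    group: str  # hypothetical, self-reported demographic label


def question_report(responses, min_group_size=MIN_GROUP_SIZE):
    """Summarize each question instead of each student."""
    # question_id -> group -> {student_id: correct}
    # If a student answers the same question twice, the last record wins.
    by_question = defaultdict(lambda: defaultdict(dict))
    for r in responses:
        by_question[r.question_id][r.group][r.student_id] = r.correct

    report = {}
    for qid, groups in by_question.items():
        all_results = [c for students in groups.values() for c in students.values()]
        # Report a group's rate only when the group is large enough
        # that no individual student can easily be singled out.
        group_rates = {
            g: sum(students.values()) / len(students)
            for g, students in groups.items()
            if len(students) >= min_group_size
        }
        # A gap is only meaningful when at least two groups are reportable.
        gap = None
        if len(group_rates) >= 2:
            gap = max(group_rates.values()) - min(group_rates.values())
        report[qid] = {
            "correct_rate": sum(all_results) / len(all_results),
            "group_rates": group_rates,
            "gap": gap,
        }
    return report
```

An instructor-facing view could then sort questions by `gap` or by low `correct_rate` to find candidates for “weed-out” questions or missing prerequisites. Hiding small groups is only a simple first step toward protecting privacy, not a guarantee.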

I am interested in hearing your ideas, too.

See you earlier tomorrow! And by the way, I have started making sure that the people I mostly speak to later in the day occasionally meet with me at an earlier time, so that they get the benefit of my full listening capacity and creative potential.